A not-so-furry dog to help the visually impaired

This story was produced by our colleagues at the BBC.

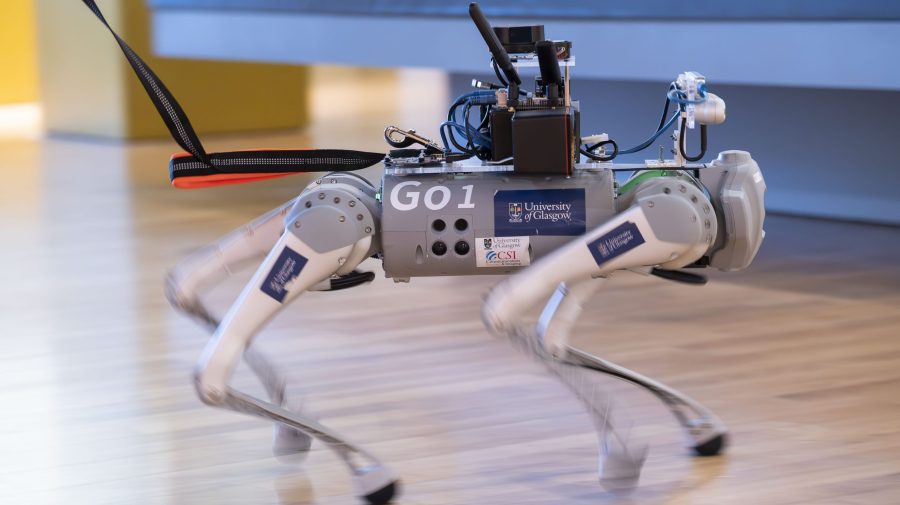

Blind and partially sighted people could soon get help to find their way around from robot guide dogs. A team from the University of Glasgow in Scotland is developing RoboGuide, an AI-powered four-legged robot to help support some of the 2.2 billion people around the world who live with sight loss.

The academics are working with industry and two charities on the project, which they hope will be available in the coming years.

Dr. Wasim Ahmad is part of the team at the university’s James Watt School of Engineering.

“Our objective is to develop it in such a way that blind and partially-sighted people can rely on it, they can trust its ability to take them safely from point A to point B, to navigate safely indoor spaces, like a very busy shopping center, or exhibition center,” he said. “One thing which I think we have integrated here, which they will enjoy, is the interactivity.”

The robot uses large language model technology, giving it the ability to understand questions and comments, and reply to them.

“They [the users] don’t only rely on other people to explain or help them for shopping or some other things,” added Ahmad. “If they want to buy something and they can’t find it, if they want to read label, this dog can help them to read.”

It also uses a series of sophisticated sensors to accurately map and assess its surroundings. Software developed by the team can help the robodog learn the best routes between locations and interpret sensor data in real time to help it avoid moving obstacles.

Dr. Ahmad said RoboGuide has been well received by human handlers in tests. But can it provide the bonding and companionship offered by a real dog? Not yet, said Dr. Ahmad, but his team is working on it.

“At our center we are also researching how a robot can understand human emotion and then respond to it,” he said. “That’s a separate search but in the long run that’s what we will also be integrating – how it can read and understand from your voice and also from your face. It can try to detect some of the emotions and respond to them.”
