A giraffe doing yoga? AI model transforms words into visualizations.

Sometimes, innovative ideas can be hard to visualize when you don’t have an image in front of you.

Take this description: a 300-foot metal construction with “lattice work girders.” Not too exciting, right? But that’s the original text explaining the design of the Eiffel Tower.

Like a sketch of the soaring tower, imagery can help ideas get off the page and into reality.

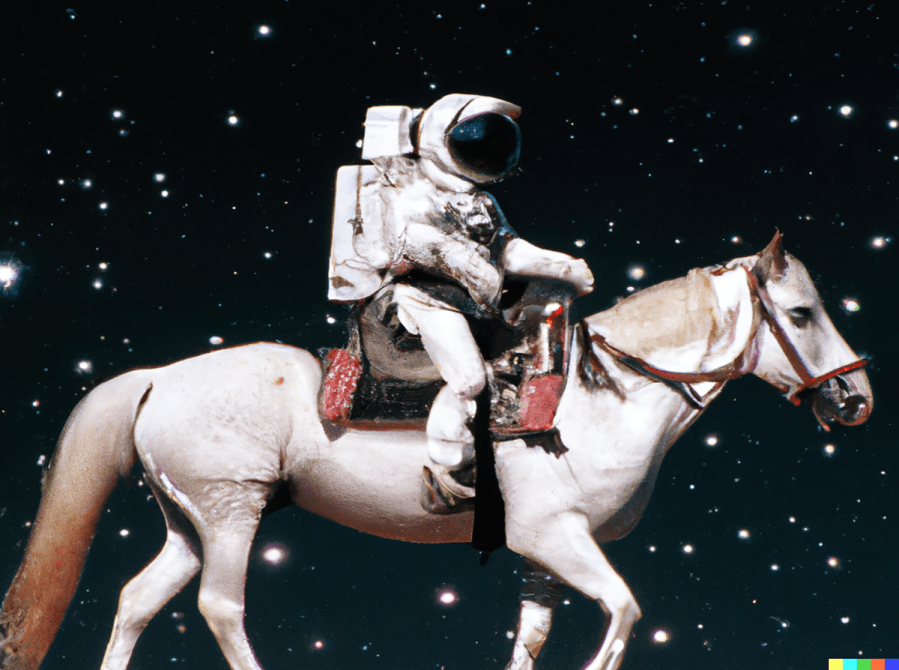

Now, there’s a machine learning program to facilitate that process. It’s called DALL-E, and it renders images using text prompts.

“DALL-E has been especially valuable and important in its ability to make storytelling more efficient and to help people be more creative,” said Joanne Jang, product manager for DALL-E. “It can visualize the concepts in your head, help you see things from a different perspective and offer you interpretations and details that you may have never thought of before.”

So, how does it work?

OpenAI, the research company that owns and operates DALL-E, compiled a database of more than 600 million licensed images and captions. Then, programmers trained the model on relationships between the text and the visuals.

Jang explained that programming DALL-E was similar to teaching a child with flashcards.

“If you show the child multiple different images of people doing yoga and tell them this is yoga, at some point they’ll learn that yoga might involve certain poses, a yoga mat,” she said.

Jang said the same applies to giraffes. The model can learn that giraffes have long necks or spots.

And the model can blend images together based on text commands.

“DALL-E learns these concepts, and then how these concepts can relate to each other. So that now you can ask it for a photo of a giraffe doing yoga,” she said.

So, when artificial intelligence can create images that look like real photos, what’s to stop people from misusing it to produce deepfakes or create violent or racist content? OpenAI omits images of celebrities and violent or sexually explicit content from the database to prevent misuse. But bias can still creep into the model.

For example, OpenAI researchers discovered that images generated using the term “CEO” brought up mostly white, cisgender men. They’ve since updated the system to make it more likely that results will include women and people of color.

It’s also why, Jang said, the company relies on feedback from users.

“They’ve been very helpful in terms of helping us understand where the model falls short so that in future training, we can improve the model by teaching these concepts and filling the gaps in knowledge,” she said.

Giraffes doing yoga is a more playful example. But DALL-E is also used by software engineers to design logos, digital artists at magazines like Cosmopolitan to produce cover art and people like Zach Katz, a musician and safe streets activist. He uses the model to produce renderings of safer streets.

“Because renders are so powerful in showing people what’s possible, I think [the images will] sort of act as a force multiplier for safe streets activism,” he said.

Katz runs the Twitter account Better Streets AI. His more than 20,000 followers suggest new kinds of streets for the model to render. Katz has the creative freedom to transform car-centric boulevards by adding bike lanes, gardens or pedestrian pavilions.

Zach Katz’s DALL-E 2 rendering of a “better” Thayer Street in Providence, Rhode Island. (Courtesy Katz)

He said it’s an affordable, accessible way to offer a visualization of safer streets.

“I would like hire artists to do it, but that would cost like hundreds of dollars,” he said.

Now, Katz can produce these renderings at roughly 13 cents each and publish them on his Twitter feed. So far, he’s done more than 60 using DALL-E 2, which is the latest version of the model.

OpenAI is gradually rolling out the program to paid subscribers and working through a waitlist. So it might be a while before just anyone can use it.

Related links: More insight from Kimberly Adams

The folks at DALL-E invited us to submit our own text prompts for the model, and we did. We have DALL-E’s interpretation of a potato playing a banjo, which is frightening and, maybe, endearing? It’s all a matter of taste … I guess. We’ve also got a cat in a Christmas parade holding a lizard in its mouth.

Meanwhile, TechCrunch has a story on some of the other companies that have used DALL-E’s tech. Including one not-so-techie client: Heinz, the ketchup company. It used DALL-E for a recent ad campaign asking people to submit their own prompts based on ketchup. Think ketchup meets the Renaissance.

OpenAI is not the only company in the text-to-image-generation space. Google has its own version called Imagen, which relies on a large language model trained only on text to interpret prompts. Because of the ethical concerns around how this technology could be used, Google hasn’t provided the code or a public demo for Imagen.

Google has a research paper and a homepage where you can view some of the renderings.

OpenAI has publicly accessible research on DALL-E 2, including a blog post on the company’s methods for managing bias in the model, which it updated in July.

The technology magazine IEEE Spectrum has a piece on how bias shows up in some of the image outputs from DALL-E, like the term “journalist” prompting only men in the results, which is clearly inaccurate. It also covers other irregularities, like the model having difficulty inserting actual text into images and producing pictures with warped faces.

It means that at least for now, some art is best left to humans.
